Serverless Computing for Beginners: The Ultimate 2024 Guide

Let’s be honest: spending hours wrestling with server infrastructure instead of writing code is frustrating. If you are a developer who wants to deploy applications without provisioning and maintaining servers, you are in the right place. Learning the basics of serverless computing can shorten your development cycles, simplify scaling, and lower your operational costs.

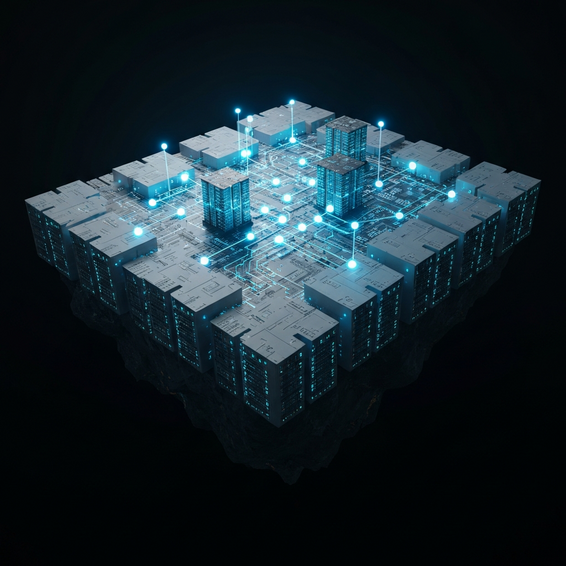

What is serverless computing? At its core, serverless is a cloud-native model in which the cloud provider takes over server provisioning, operating system maintenance, and capacity scaling. Developers write and deploy their code, and pay only for the compute time that code actually uses. It is an efficient, pay-as-you-go approach well suited to modern software development.

Throughout this comprehensive guide, we will strip away the jargon and break down what serverless actually entails, why it eliminates major development bottlenecks, and how you can start using it right away. Whether you are building projects solo or collaborating within a growing DevOps team, the insights ahead will position you for long-term success in the cloud computing era.

Understanding Serverless Computing for Beginners: The Problem with Traditional Servers

Before you can fully appreciate the magic of serverless architecture, it helps to look at the exact headaches it cures. Not too long ago, deploying a web application meant provisioning either a clunky physical server or a virtual machine (VM). While functional, this old-school method comes packed with hidden complexities and a never-ending list of maintenance chores.

For starters, traditional servers demand constant babysitting. You are perpetually on the hook to patch operating systems, tweak security protocols, and nervously monitor uptime metrics. Imagine your app suddenly goes viral—a standard server is highly likely to buckle under the sudden traffic spike unless you’ve manually scaled it up in advance. This stressful balancing act pulls developers away from building out core backend features, forcing them into the role of full-time sysadmins.

Then there is the harsh reality of scalability and cost. Scaling horizontally in a legacy setup involves manually spinning up new instances, configuring load balancers, and praying your database connections survive the surge. Worse yet, you are footing the bill for idle time. Paying to run a server 24/7 when it only handles traffic for a few hours a day is like leaving your car running overnight just in case you need to drive. Serverless computing wipes out these financial and technical hurdles by entirely abstracting the server layer away.

Basic Solutions: Getting Started with Serverless Architecture

Making the leap from legacy servers does not have to feel overwhelming. If you are new to serverless computing, the smartest move is to start with simple implementations that deliver quick wins. By following a few straightforward steps, you can deploy your first function without a steep learning curve.

- Choose a Cloud Provider: Kick things off by setting up an account with one of the heavy hitters. AWS, Google Cloud, and Azure all provide rock-solid serverless platforms, complete with generous free tiers that are ideal for experimentation.

- Write a Simple Function: Draft a straightforward script using Node.js or Python. Designed to execute one specific task, this will serve as your very first FaaS (Function-as-a-Service) deployment.

- Deploy Using AWS Lambda: Upload that script to AWS Lambda (or your chosen provider’s equivalent). The service provisions an execution environment for your code on demand, so there is no server for you to set up.

- Trigger the Function: Finally, configure an API Gateway to trigger your new code via a standard HTTP request. Just like that, you have successfully launched a scalable, fully serverless API!
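
To make the steps above concrete, here is a minimal sketch of what a first function might look like in Python. It assumes an API Gateway proxy integration, where query string values arrive under `queryStringParameters` and the function returns a dictionary with `statusCode` and `body`. The greeting logic and the `name` parameter are made up for illustration.

```python
import json


def lambda_handler(event, context):
    """Entry point that AWS Lambda calls once per incoming request."""
    # With a proxy integration, query string values arrive under
    # "queryStringParameters" (None when the URL has no query string).
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")  # "name" is a hypothetical parameter

    # The proxy integration expects a status code, optional headers,
    # and a string body.
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local smoke test with a hand-built event; no AWS account needed.
    fake_event = {"queryStringParameters": {"name": "Ada"}}
    print(lambda_handler(fake_event, None))
```

Because the handler is an ordinary function that takes a dictionary, you can run it locally with a hand-built event before uploading anything.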

Beyond functions, serverless storage is another good early win. Platforms like Amazon S3 or Google Cloud Storage let you host static frontend assets (HTML, CSS, and JavaScript) without running web servers like Nginx or Apache. When you pair a static frontend with a serverless backend API, you end up with a resilient application that has no web servers of your own to patch or monitor.

Advanced Solutions: Scaling Your Backend Development

After getting comfortable with the basics, it is time to view serverless through a more advanced, IT-focused lens. As user demand grows, patching together a single standalone function simply won’t cut it anymore. Instead, you need to architect a system capable of handling complex workflows, processing massive data volumes, and seamlessly integrating with modern CI/CD pipelines.

A highly effective strategy at this stage is embracing event-driven architectures. Rather than relying solely on synchronous HTTP requests, you can route data through message queues like Amazon SQS or event buses such as EventBridge. Doing so empowers your functions to process heavy tasks asynchronously in the background, which dramatically boosts both the fault tolerance and the overall responsiveness of your applications.
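
As a rough sketch of the queue-driven pattern described above, the handler below processes a batch of SQS messages. It assumes the standard SQS event shape (a `Records` list whose items carry `messageId` and `body`) and that the queue trigger has partial batch responses enabled, so only the failed messages are retried. The `process_order` function and the order fields are hypothetical.

```python
import json


def process_order(order):
    """Hypothetical business logic: reject orders that lack an id."""
    if "order_id" not in order:
        raise ValueError("order is missing order_id")
    return order["order_id"]


def lambda_handler(event, context):
    """Handle one batch of SQS messages delivered to the function."""
    failures = []
    for record in event.get("Records", []):
        try:
            # Each SQS record carries the message payload as a string body.
            process_order(json.loads(record["body"]))
        except ValueError:
            # Covers malformed JSON as well (JSONDecodeError is a ValueError).
            # Reporting the id lets the queue retry just this message.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Returning the failed message ids, rather than raising an exception, keeps one bad message from forcing the whole batch to be reprocessed.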

When it comes to serious backend development, baking in automated CI/CD pipelines is non-negotiable. Top-tier serverless ecosystems thrive on seamless automation. By hooking up tools like GitHub Actions or GitLab CI, you can trigger automated tests and deploy functions the exact second your code merges into the main branch. Not only does this eliminate human error, but it also accelerates your release cycles immensely.

Furthermore, dealing with application state requires a bit of a mindset shift. Serverless functions are stateless and ephemeral: an execution environment may be reused for a while, but nothing kept in memory or on local disk is guaranteed to survive until the next invocation. Anything that must persist belongs in an external store built to scale with you. A purpose-built serverless database like Amazon DynamoDB is accessed through HTTP API calls rather than a pool of long-lived connections, so it sidesteps the connection limits that traditional databases can hit when many function instances start at once.
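
The sketch below illustrates the stateless pattern under some loud assumptions: `InMemoryStore` is a hypothetical stand-in for an external table, and `make_handler` is an illustrative helper, not part of any provider SDK. In a real deployment the `increment` method might wrap an atomic DynamoDB update instead of a dictionary.

```python
class InMemoryStore:
    """Stand-in for an external table; a real function would call a database."""

    def __init__(self):
        self._items = {}

    def increment(self, key):
        # Add one to the counter stored under `key` and return the new value.
        self._items[key] = self._items.get(key, 0) + 1
        return self._items[key]


def make_handler(store):
    """Build a handler that keeps all durable state in `store`."""

    def handler(event, context):
        user_id = event["user_id"]  # hypothetical event field
        # No module-level counters: the only state lives in the store,
        # so any function instance can serve any request.
        visits = store.increment(user_id)
        return {"user_id": user_id, "visits": visits}

    return handler


if __name__ == "__main__":
    store = InMemoryStore()
    first_instance = make_handler(store)
    second_instance = make_handler(store)  # simulates a fresh environment
    print(first_instance({"user_id": "u1"}, None))
    print(second_instance({"user_id": "u1"}, None))
```

Two separately built handlers sharing one store see the same counter, which is the property you want when the provider may route each request to a different instance.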

Best Practices for Serverless Deployments

While it is true that the cloud provider does the heavy lifting with the hardware, you are still firmly in the driver’s seat when it comes to code optimization. Sticking to a few core best practices will ensure your new DevOps infrastructure stays secure, lightning-fast, and remarkably cost-effective over the long haul.

- Optimize Cold Starts: A “cold start” is the extra latency on a request that arrives when no warm instance of your function is available, so the provider must first create a fresh execution environment and load your code. You can reduce this delay by shrinking your deployment packages and pruning unnecessary external dependencies.

- Implement Least Privilege Security: Be incredibly strict with IAM permissions. If a specific function only requires read access to a single database table, absolutely never grant it global write permissions across your environment.

- Manage Environment Variables Securely: Hardcoding API keys or database passwords into your scripts is a recipe for disaster. Always rely on robust tools like AWS Secrets Manager to inject your sensitive configurations dynamically during runtime.

- Monitor and Set Alarms: The double-edged sword of a pay-as-you-go model is that a minor bug, such as a function that accidentally retriggers itself in a loop, can rack up a staggering cloud bill overnight. Set up billing alarms and keep a close eye on execution metrics such as invocation counts and duration.

- Use Infrastructure as Code (IaC): With tools like Terraform or the Serverless Framework, you can define your entire architecture in plain text files. This keeps your deployments version-controlled, repeatable, and easy to review before they reach production.
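
To illustrate the least-privilege practice from the list above, here is a small sketch that builds an IAM policy document granting read-only access to a single DynamoDB table. The helper name `read_only_table_policy` and the example ARN (account id and table name) are placeholders; the policy structure and the `dynamodb:GetItem` and `dynamodb:Query` actions follow the standard IAM policy format.

```python
import json


def read_only_table_policy(table_arn):
    """Return an IAM policy document allowing reads on one DynamoDB table."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Only the read actions this function needs; no wildcards.
                "Action": ["dynamodb:GetItem", "dynamodb:Query"],
                # Scoped to a single table instead of "*".
                "Resource": table_arn,
            }
        ],
    }


# Placeholder ARN: the account id and table name are made up.
EXAMPLE_ARN = "arn:aws:dynamodb:us-east-1:123456789012:table/Orders"

if __name__ == "__main__":
    print(json.dumps(read_only_table_policy(EXAMPLE_ARN), indent=2))
```

Generating the document from code is only one option; the same JSON could live directly in a Terraform or Serverless Framework configuration file.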

Recommended Tools and Resources

A reliable serverless application is much easier to build with the right toolkit. Below are a few standout platforms and frameworks that can streamline your daily workflow and improve your productivity as a developer. (Note: Some links below may be affiliate links.)

- AWS Lambda: Widely considered the gold standard for executing code without server provisioning. It natively integrates with almost everything inside the Amazon Web Services ecosystem.

- The Serverless Framework: An open-source utility that simplifies how you configure, build, and deploy APIs or background tasks, with support for several different cloud providers.

- Vercel: A dream come true for frontend developers. Vercel delivers a hyper-fast serverless edge experience that is tailor-made for modern JavaScript frameworks like Next.js and React.

- Supabase: Often described as an open-source Firebase alternative, Supabase bundles a managed Postgres database with edge functions, which makes full-stack app launches much simpler.

- Datadog: An absolute must-have for monitoring. It grants you unparalleled observability into complicated serverless logs, live performance metrics, and detailed distributed traces.

FAQ Section

What exactly is serverless computing?

Think of it as a cloud deployment model where your chosen vendor fully takes over the heavy lifting of allocating and provisioning servers. Your only job is to write high-quality code. Meanwhile, the vendor dynamically handles all the infrastructure routing, capacity scaling, and routine maintenance quietly in the background.

Do serverless applications still use servers?

Absolutely—physical servers are still very much involved in processing your code. The catchy term “serverless” just means the frustrating aspects of managing them (like capacity forecasting and late-night OS patching) are totally abstracted away from you. The cloud provider owns, houses, and protects the physical hardware on your behalf.

Is serverless computing cheaper than traditional hosting?

For spiky or low-traffic workloads, usually yes. Thanks to pay-as-you-go billing, you are charged only for the time your code is actually running, typically metered in milliseconds, plus a small per-request fee. If you launch a project and it gets zero traffic on day one, your compute bill is essentially zero, whereas a traditional server costs the same whether it is busy or idle. For steady, high-volume traffic, however, an always-on server can work out cheaper, so it is worth estimating both before you commit.
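
The pay-as-you-go arithmetic can be sketched in a few lines. The function below assumes a Lambda-style pricing model (a charge per GB-second of compute plus a charge per million requests) and ignores any free tier. The rates in the example are illustrative assumptions, so check your provider’s current pricing page before relying on them.

```python
def monthly_function_cost(invocations, avg_duration_ms, memory_mb,
                          price_per_gb_second, price_per_million_requests):
    """Estimate a monthly bill for one function, ignoring any free tier."""
    # Compute is billed as (seconds of runtime) x (memory in GB).
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    request_cost = (invocations / 1_000_000) * price_per_million_requests
    return compute_cost + request_cost


if __name__ == "__main__":
    # Assumed, illustrative rates; real prices vary by provider and region.
    estimate = monthly_function_cost(
        invocations=1_000_000,
        avg_duration_ms=100,
        memory_mb=128,
        price_per_gb_second=0.0000166667,
        price_per_million_requests=0.20,
    )
    print(f"Estimated monthly cost: ${estimate:.2f}")
```

With these assumed rates, one million 100 ms invocations at 128 MB come to roughly $0.41 a month, and zero invocations cost nothing.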

What are the main limitations of serverless architecture?

The most common drawbacks include cold starts (the brief delay when a dormant function wakes up to handle a request), the risk of vendor lock-in, and the added complexity of setting up local testing environments. Plus, serverless platforms are generally not the best fit for continuous, heavy-duty background tasks like massive video rendering, because functions have execution time limits (AWS Lambda, for example, caps a single invocation at 15 minutes).

Conclusion

Taking the plunge into serverless computing can change how you think about software architecture. When you strip away the time-consuming burden of maintaining traditional servers, you are free to focus on what matters most: building robust backend features, delivering faster updates, and providing more value to your users.

Try starting small by pushing a single FaaS function live, and then organically introduce advanced concepts like event-driven messaging, automated CI/CD pipelines, and fluid NoSQL databases. Just remember to always keep security best practices top of mind, and never take your eyes off your infrastructure monitoring tools. Embrace the serverless cloud revolution, and start elevating your daily development workflow today!