How to Automate Deployments with GitHub Actions

Let’s be honest: manual deployment processes are a massive bottleneck for software teams in today’s fast-paced development world. You likely spend hours writing code, testing it locally, and making sure every single feature works flawlessly. Yet, right when it is finally time to push to production, you hit a wall. Suddenly, you are stuck wrestling with clunky FTP clients, managing complex SSH keys, or relying on fragile server scripts that always seem to break at the worst possible moment.

If you are completely exhausted by these repetitive, error-prone tasks, it is definitely time to learn how to automate deployments with GitHub Actions. As a powerful, built-in CI/CD (Continuous Integration and Continuous Deployment) platform, this tool gives developers the ability to run complete software workflows right alongside their codebase.

It really does not matter if you are managing a small personal passion project, a massive enterprise-level application, or a sprawling DevOps environment. Automation will completely transform how you handle software delivery. By the time you finish reading this guide, you will know exactly how to set up robust deployment pipelines, practically eliminate human error, and ship your code to production with absolute confidence.

Why Knowing How to Automate Deployments with GitHub Actions Matters

Have you ever wondered why manual deployments fail so often? From a technical standpoint, the root issues usually boil down to human error and environmental drift. When a developer handles a push manually, they have to memorize and execute a very strict sequence of commands. Just one missed step, whether that is forgetting to run a crucial database migration or failing to compile assets, is all it takes to bring a live application crashing to a halt.

On top of that, manual updates almost always lead to the classic “well, it works on my machine” excuse. The reality is that local development setups rarely match the precise configurations, dependency versions, or operating systems used on production servers. If you aren’t using an automated pipeline, these subtle mismatches easily slip by unnoticed—at least until the application unexpectedly breaks in the real world.

Beyond the technical risks, manual deployments offer very little visibility and usually no audit trail. Imagine a deployment failing at 2:00 AM; without a centralized log, you have no way of knowing exactly what went wrong or who even initiated the push. Shifting to an automated workflow removes this guesswork, because every run is recorded with its trigger, its author, and its step-by-step output. It helps you maintain a consistent state while giving your entire engineering team valuable insight into the application’s lifecycle.

Quick Fixes / Basic Solutions

Believe it or not, getting started with your very first automation pipeline is surprisingly simple. You certainly do not need to be a certified cloud architect to put together a basic deployment workflow. Just follow these actionable steps to create your first action:

- Create the Workflow Directory: Start at your project’s root folder and create a new directory path: .github/workflows. This specific folder is exactly where GitHub looks to find your automation scripts.

- Define the YAML File: Inside that new folder, go ahead and create a configuration file called deploy.yml. YAML is the data serialization format GitHub uses for workflow files.

- Set Up the Trigger: Next, you need to tell GitHub exactly when it should run your script. By using the on: push directive with a branches filter set to main, you ensure that the workflow triggers automatically the moment new code gets merged into your main branch.

- Checkout the Code: Take advantage of the pre-built actions/checkout@v4 action. This is an essential step, as it pulls your repository’s code straight into an isolated runner environment so it can actually be processed.

- Run Deployment Commands: Finally, just add in the specific steps your project requires to build, test, and securely deploy the application via SSH or an API.

To make things even easier, here is a basic template to give you a clear starting point:

    name: Basic Deploy Workflow

    on:
      push:
        branches:
          - main

    jobs:
      deploy:
        runs-on: ubuntu-latest
        steps:
          - name: Checkout Code
            uses: actions/checkout@v4

          - name: Setup Node.js
            uses: actions/setup-node@v4
            with:
              node-version: '20'

          - name: Install and Build
            run: |
              npm install
              npm run build

          - name: Deploy via SSH
            # @master tracks a moving branch; pin a release tag or commit SHA for real use
            uses: appleboy/scp-action@master
            with:
              # Connection details are read from GitHub Secrets, never hardcoded
              host: ${{ secrets.SERVER_HOST }}
              username: ${{ secrets.SERVER_USER }}
              key: ${{ secrets.SERVER_KEY }}
              source: "dist/*"
              target: "/var/www/html/app"

That simple configuration covers the immediate need for automation. From now on, you just push your code to main, and GitHub takes care of the build and the file transfer in the background.

Advanced Solutions

Once you feel comfortable with the basics, it is time to start exploring more complex, enterprise-level strategies. Advanced automated deployments usually require managing several staging environments, securely handling sensitive secrets, and configuring conditional logic tailored to your cloud deployment targets.

Matrix Builds for Multi-Environment Testing: Did you know you can configure GitHub Actions to test your codebase across multiple operating systems or language versions at the same time? By setting up a build matrix, your pipeline can run the same checks on Node.js 18, 20, and 22 in parallel. This surfaces version-specific failures long before your code ever reaches production.
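To make the matrix idea concrete, here is a minimal sketch of a test job that fans out across two operating systems and three Node.js versions. It assumes your package.json defines a test script; the workflow name and the choice of operating systems are illustrative rather than prescribed.

```yaml
name: Matrix Test

on:
  push:
    branches:
      - main

jobs:
  test:
    # One job is created per os/node-version combination (2 x 3 = 6 jobs)
    runs-on: ${{ matrix.os }}
    strategy:
      # Let the remaining combinations finish even if one of them fails
      fail-fast: false
      matrix:
        os: [ubuntu-latest, windows-latest]
        node-version: ['18', '20', '22']
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: Setup Node.js ${{ matrix.node-version }}
        uses: actions/setup-node@v4
        with:
          node-version: ${{ matrix.node-version }}

      - name: Install and Test
        # Assumes a "test" script exists in package.json
        run: |
          npm install
          npm test
```

Setting fail-fast to false is a design choice: you see every failing combination in a single run instead of only the first one.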

Staging vs. Production Workflows: Rather than pushing code straight to production, modern IT teams tend to rely on multi-stage pipelines. For instance, you can set up distinct deployment jobs for your staging environments that automatically trigger whenever someone pushes to a develop branch. Then, for the actual production environment, you can implement manual approval gates, which GitHub supports through environment protection rules with required reviewers. A designated reviewer has to click “Approve” before any code goes live.
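One way to sketch this split is a single workflow with two jobs, each bound to a GitHub environment. The environment names (staging, production) and the scripts/deploy.sh script are hypothetical stand-ins for whatever your project uses. The approval gate itself is not written in YAML: it comes from adding required reviewers to the production environment in the repository settings, and its availability depends on your GitHub plan and repository visibility.

```yaml
name: Staged Deploy

on:
  push:
    branches:
      - develop
      - main

jobs:
  deploy-staging:
    # Runs only for pushes to the develop branch
    if: github.ref == 'refs/heads/develop'
    runs-on: ubuntu-latest
    environment: staging
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: Deploy to staging
        # Hypothetical script; replace with your own deployment command
        run: bash ./scripts/deploy.sh staging

  deploy-production:
    # Runs only for pushes to the main branch
    if: github.ref == 'refs/heads/main'
    runs-on: ubuntu-latest
    # If this environment has required reviewers configured,
    # the job waits here until someone approves it
    environment: production
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: Deploy to production
        # Hypothetical script; replace with your own deployment command
        run: bash ./scripts/deploy.sh production
```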

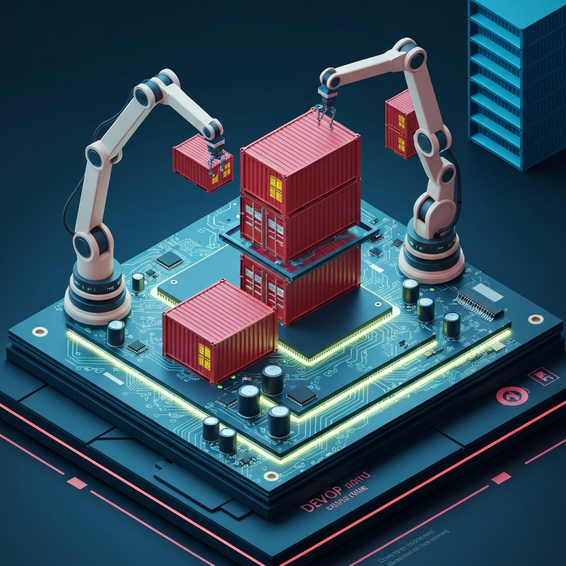

Docker and Kubernetes Integrations: The most advanced pipelines out there rely heavily on containerization. You can easily build your Docker images right within a GitHub Actions runner, then push them out to container registries like AWS ECR, GitHub Container Registry, or Docker Hub. From that point, your workflow can initiate rolling deployments across your Kubernetes clusters, helping you achieve true zero-downtime updates.
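As a rough sketch of the build-and-push half of that flow, the workflow below publishes an image to GitHub Container Registry. It assumes a Dockerfile at the repository root and an owner/repository name that is already lowercase, since image names cannot contain uppercase letters. The image tag scheme (the commit SHA) is just one reasonable choice.

```yaml
name: Build and Push Image

on:
  push:
    branches:
      - main

# The job only needs to read the code and write packages
permissions:
  contents: read
  packages: write

jobs:
  build-and-push:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: Log in to GitHub Container Registry
        uses: docker/login-action@v3
        with:
          registry: ghcr.io
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}

      - name: Build and push image
        uses: docker/build-push-action@v6
        with:
          # Assumes a Dockerfile in the repository root
          context: .
          push: true
          # Tagging with the commit SHA keeps every image traceable to a commit
          tags: ghcr.io/${{ github.repository }}:${{ github.sha }}
```

The Kubernetes rollout step is left out on purpose, because it depends entirely on how your cluster credentials are provisioned.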

Automated Slack Notifications: Team visibility is absolutely crucial. By tying Slack webhooks directly into your pipeline, your DevOps crew will receive instant notifications regarding build successes or unexpected failures. That way, the team can start a coordinated response the moment an issue arises.
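A simple version of this needs no third-party action at all. The sketch below posts to a Slack incoming webhook with curl whenever an earlier step fails. It assumes you have stored the webhook URL in a secret named SLACK_WEBHOOK_URL (a name chosen here for illustration), and the build step is a placeholder for your real pipeline.

```yaml
name: Deploy with Slack Alert

on:
  push:
    branches:
      - main

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: Install and Build
        run: |
          npm install
          npm run build

      - name: Notify Slack on failure
        # failure() is true only when a previous step in this job has failed
        if: failure()
        env:
          # Assumed secret name; holds the Slack incoming webhook URL
          SLACK_WEBHOOK_URL: ${{ secrets.SLACK_WEBHOOK_URL }}
        run: |
          RUN_URL="${GITHUB_SERVER_URL}/${GITHUB_REPOSITORY}/actions/runs/${GITHUB_RUN_ID}"
          curl -sS -X POST -H 'Content-type: application/json' \
            --data "{\"text\": \"Deploy failed for ${GITHUB_REPOSITORY}: ${RUN_URL}\"}" \
            "$SLACK_WEBHOOK_URL"
```

Passing the secret through env rather than interpolating it directly into the script keeps it out of the rendered shell command.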

Best Practices

If you want to keep your pipelines fast, reliable, and highly secure, following industry standards isn’t just an option—it is a necessity. Honestly, a poorly optimized automation script can quickly become just as frustrating and time-consuming as the manual deployments you were trying to escape.

- Secure Your Secrets via OIDC: You should never, under any circumstances, hardcode API keys, server IP addresses, or database credentials straight into your repository. Always use GitHub Secrets. If you are an advanced AWS or Google Cloud user, consider implementing OpenID Connect (OIDC) to request short-lived access tokens rather than storing vulnerable, long-lived IAM keys.

- Cache Your Dependencies: Continuously downloading node modules, composer packages, or massive Docker layers during every single run is a huge waste of valuable computation time. Instead, use the actions/cache action to store dependencies between your runs. On dependency-heavy projects, this can noticeably shorten each run.

- Pin Action Versions: Whenever you utilize third-party GitHub Actions from the community marketplace, make sure to specify a strict version tag (something like @v4.1.0) or, stricter still, a full commit SHA, instead of just tracking the @main branch. This habit prevents unexpected pipeline breaks if the original author releases a backward-incompatible update.

- Limit Token Permissions: You should always apply the principle of least privilege to the GITHUB_TOKEN that GitHub Actions issues to each run. If a specific workflow only needs permission to read the repository code, declare read-only access with a permissions block (for example, contents: read) rather than leaving write access in place.
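Several of these practices can be combined in one workflow. The sketch below restricts the token, caches npm downloads, and swaps long-lived AWS keys for an OIDC role. It assumes a committed package-lock.json, an IAM role already configured to trust GitHub’s OIDC provider, and a secret named AWS_DEPLOY_ROLE_ARN holding that role’s ARN; the secret name and region are illustrative. Major-version tags are used for brevity and can be replaced with exact tags or commit SHAs.

```yaml
name: Hardened Deploy

on:
  push:
    branches:
      - main

# Least privilege: read-only repository access, plus the
# id-token permission that OIDC needs to request a token
permissions:
  contents: read
  id-token: write

jobs:
  deploy:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'

      - name: Cache npm downloads
        uses: actions/cache@v4
        with:
          path: ~/.npm
          # The key changes whenever the lockfile changes, so stale caches are not reused
          key: ${{ runner.os }}-npm-${{ hashFiles('**/package-lock.json') }}
          restore-keys: |
            ${{ runner.os }}-npm-

      - name: Install and Build
        # npm ci requires a package-lock.json in the repository
        run: |
          npm ci
          npm run build

      - name: Configure AWS credentials via OIDC
        uses: aws-actions/configure-aws-credentials@v4
        with:
          # Assumed secret name; the role must trust GitHub's OIDC provider
          role-to-assume: ${{ secrets.AWS_DEPLOY_ROLE_ARN }}
          aws-region: us-east-1

      - name: Verify the assumed identity
        run: aws sts get-caller-identity
```

The final step only confirms that the short-lived credentials work; your actual deployment commands would take its place.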

Recommended Tools / Resources

If you want to squeeze the absolute maximum value out of your new automation pipelines, you might want to consider pairing GitHub Actions with these highly robust tools and platforms:

- DigitalOcean App Platform: This is absolutely perfect for deploying web applications straight from a GitHub repository, requiring practically zero manual server configuration.

- AWS CodeDeploy: An excellent choice for advanced enterprise users who desperately need granular control over their EC2 instances, auto-scaling groups, and complex Lambda functions.

- ServerPilot / Forge: These tools are ideal for developers who are tasked with managing automated WordPress and PHP deployments across custom cloud virtual machines.

- Docker: Containerizing your application is the best way to ensure that whatever you build inside GitHub Actions will run identically once it reaches your target server.

Combining these tools with strong CI/CD workflows goes a long way toward a smooth, professional operational experience for everyone on your team.

FAQ Section

What are GitHub Actions?

Simply put, GitHub Actions is a remarkably powerful automation platform that comes natively integrated right into GitHub. It empowers developers to fully automate their build, test, and deployment pipelines straight from their source code repositories by using easy-to-read YAML configuration files.

Are GitHub Actions free to use?

Yes, they are! GitHub provides a surprisingly generous free tier. If you have public repositories, standard GitHub-hosted runners are free to use. Meanwhile, private repositories receive a set amount of free computation minutes every single month (2,000 minutes on the GitHub Free plan at the time of writing). For most small to medium-sized projects, that allotment is more than enough.

Can I use GitHub Actions for WordPress?

Absolutely. You can easily use GitHub Actions to automate all of your custom theme and plugin deployments. Your customized workflows can quickly run syntax checks, compile SCSS files, and securely transfer those finished assets over to your web host using SSH, FTP, or even specialized deployment webhooks.
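As a rough illustration, a theme deployment could look like the sketch below. Everything project-specific is an assumption: the SCSS path, the list of files to copy, the target directory, and the availability of PHP on the runner. The secret names reuse the ones from the basic template earlier in this guide.

```yaml
name: Deploy WordPress Theme

on:
  push:
    branches:
      - main

jobs:
  deploy-theme:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout Code
        uses: actions/checkout@v4

      - name: PHP syntax check
        # php -l lints one file per call; xargs fails the step if any file fails
        # Assumes php is available on the runner image
        run: |
          find . -name '*.php' -not -path './vendor/*' -print0 | xargs -0 -r -n1 php -l

      - name: Compile SCSS
        # Hypothetical paths; adjust to where your theme keeps its styles
        run: |
          npx --yes sass assets/scss/style.scss style.css

      - name: Copy theme files to the server
        # @master tracks a moving branch; pin a release tag or commit SHA for real use
        uses: appleboy/scp-action@master
        with:
          host: ${{ secrets.SERVER_HOST }}
          username: ${{ secrets.SERVER_USER }}
          key: ${{ secrets.SERVER_KEY }}
          # Hypothetical file list and theme directory
          source: "*.php,style.css"
          target: "/var/www/html/wp-content/themes/my-theme"
```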

How do I debug a failing deployment pipeline?

The best way to debug is to review the detailed execution logs found directly inside the “Actions” tab of your GitHub repository. Every single step defined in your YAML file gets logged individually in real time. Because of this, it becomes much easier to spot the exact command or the specific server response that caused the whole thing to fail.

Conclusion

At the end of the day, transitioning away from manual uploads is easily one of the highest-impact investments you could ever make for your development team. It dramatically reduces the chance of human error, saves you countless hours of incredibly tedious work, and ensures that your production environments always remain stable, highly auditable, and entirely predictable.

Mastering exactly how to automate deployments with GitHub Actions gives you the power to ship code with both impressive speed and absolute confidence. My advice? Start small. Begin by implementing a basic workflow to copy files, make sure to secure your credentials using GitHub Secrets, and then gradually introduce more advanced testing gates as you grow comfortable. Take back control of your deployment lifecycle today, and fully embrace the exciting future of automated software delivery.